
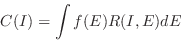

Although we use a spectrometer to measure the spectrum of a source, what the spectrometer obtains is not the actual spectrum, but rather photon counts ($C$) within specific instrument channels ($I$). This observed spectrum is related to the actual spectrum of the source ($f(E)$) by:

$$C(I) = \int_0^\infty f(E)\, R(I,E)\, dE \tag{2.1}$$

where $R(I,E)$ is the instrumental response and is proportional to the probability that an incoming photon of energy $E$ will be detected in channel $I$. Ideally, then, we would like to determine the actual spectrum of a source, $f(E)$, by inverting this equation, thus deriving $f(E)$ for a given set of $C(I)$. Regrettably, this is not possible in general, as such inversions tend to be non-unique and unstable to small changes in $C(I)$. (For examples of attempts to circumvent these problems see Blissett & Cruise (1979); Kahn & Blissett (1980); Loredo & Epstein (1989)).
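In practice the integral in equation (2.1) is evaluated on a finite grid of energies. As a sketch, assuming energy bins with centers $E_j$ and widths $\Delta E_j$ (notation introduced here for illustration only), the relation becomes a matrix product:

$$C(I) \approx \sum_j R(I, E_j)\, f(E_j)\, \Delta E_j$$

Recovering $f(E_j)$ from $C(I)$ then amounts to inverting the matrix with elements $R(I, E_j)\,\Delta E_j$. When that matrix is not square, or is nearly singular because neighboring energies produce almost the same channel distribution, the inversion is non-unique or amplifies small fluctuations in $C(I)$.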

The usual alternative is to choose a model spectrum, $f(E)$, that can be described in terms of a few parameters (i.e., $f(E, p_1, p_2, \ldots)$), and match, or “fit” it to the data obtained by the spectrometer. For each $f(E)$, a predicted count spectrum ($C_p(I)$) is calculated and compared to the observed data ($C(I)$). Then a “fit statistic” is computed from the comparison and used to judge whether the model spectrum “fits” the data obtained by the spectrometer.
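The calculation of a predicted count spectrum can be sketched as follows, approximating the integral in equation (2.1) by a sum over energy bins. Everything in this example is an assumption made for illustration: the power-law model, the three-bin energy grid, the response values, and the function names `power_law` and `fold`. A real response also includes effective area and exposure time, which this toy omits.

```python
# Toy forward-folding sketch: all numbers below are made up for illustration.

def power_law(energy, norm, index):
    """Hypothetical model spectrum f(E) = norm * E**(-index)."""
    return norm * energy ** (-index)

def fold(model_flux, response, bin_widths):
    """Discretized eq. (2.1): C_p(I) = sum_j R[I][j] * f(E_j) * dE_j."""
    return [
        sum(r_ij * f_j * de_j
            for r_ij, f_j, de_j in zip(row, model_flux, bin_widths))
        for row in response
    ]

# Three energy bins (keV) and three channels. Row I of the response holds
# the values R(I, E_j); each column sums to at most 1 because a photon is
# recorded in at most one channel.
energies = [1.0, 2.0, 4.0]
bin_widths = [1.0, 1.0, 2.0]
response = [
    [0.8, 0.1, 0.0],
    [0.1, 0.7, 0.2],
    [0.0, 0.1, 0.6],
]

# Evaluate the model on the energy grid, then fold it through the response.
flux = [power_law(e, norm=100.0, index=2.0) for e in energies]
predicted = fold(flux, response, bin_widths)
print(predicted)
```

The list `predicted` plays the role of $C_p(I)$ and is what gets compared, channel by channel, with the observed counts.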

The model parameters are then varied to find the parameter values that give the most desirable fit statistic. These values are referred to as the best-fit parameters. The model spectrum, $f_b(E)$, made up of the best-fit parameters is considered to be the best-fit model.
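As a rough illustration of varying parameters to find the best fit, the sketch below tries every combination on a coarse parameter grid and keeps the one with the smallest statistic. The helper `best_fit`, the two-parameter toy model (a scaled template plus a constant), and the sum of squared differences used as the statistic are all assumptions for this example. Practical fitting programs generally use iterative minimizers rather than a brute-force grid.

```python
from itertools import product

def best_fit(statistic, grids):
    """Return (best_params, best_value) over the Cartesian product of grids.

    `statistic` is any callable that maps a parameter tuple to a number,
    where a smaller number means a better fit.
    """
    best_params, best_value = None, float("inf")
    for params in product(*grids):
        value = statistic(params)
        if value < best_value:
            best_params, best_value = params, value
    return best_params, best_value

# Toy data generated from norm = 2, background = 1 with no noise.
template = [4.0, 2.0, 1.0]     # made-up spectral shape per channel
observed = [9.0, 5.0, 3.0]     # made-up observed counts

def sum_sq(params):
    """Placeholder fit statistic: sum of squared differences."""
    norm, background = params
    return sum((c - (norm * t + background)) ** 2
               for c, t in zip(observed, template))

norms = [0.5 * k for k in range(9)]         # 0.0, 0.5, ..., 4.0
backgrounds = [0.5 * k for k in range(5)]   # 0.0, 0.5, ..., 2.0
params, value = best_fit(sum_sq, [norms, backgrounds])
print(params, value)
```

Because the toy data are noise-free and the true values lie on the grid, the search recovers them exactly; with real data the minimum statistic is greater than zero.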

The most common fit statistic in use for determining the “best-fit” model is $\chi^2$, defined as follows:

$$\chi^2 = \sum_I \frac{\left(C(I) - C_p(I)\right)^2}{\left(\sigma(I)\right)^2} \tag{2.2}$$

where $\sigma(I)$ is the (generally unknown) error for channel $I$ (e.g., if $C(I)$ are counts then $\sigma(I)$ is usually estimated by $\sqrt{C(I)}$; see e.g. Wheaton et al. (1995) for other possibilities).
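A minimal sketch of equation (2.2), assuming the observed and predicted counts are given as plain lists. The function name `chi_squared` and the sample numbers are made up for illustration. When no errors are supplied, the sketch falls back on the square root of the observed counts, which only makes sense if every channel has a positive count.

```python
import math

def chi_squared(observed, predicted, sigma=None):
    """Eq. (2.2): sum over channels of (C(I) - C_p(I))**2 / sigma(I)**2.

    If sigma is omitted, sigma(I) = sqrt(C(I)) is assumed, which requires
    every observed count to be positive.
    """
    if sigma is None:
        sigma = [math.sqrt(c) for c in observed]
    return sum(((c - cp) / s) ** 2
               for c, cp, s in zip(observed, predicted, sigma))

observed = [100.0, 36.0, 9.0]     # made-up counts C(I)
predicted = [90.0, 30.0, 12.0]    # made-up model counts C_p(I)
stat = chi_squared(observed, predicted)
print(stat)
```

Each channel here differs from the model by exactly one estimated standard deviation, so each contributes 1 to the sum.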

Once a “best-fit” model is obtained, one must ask two questions:

- How confident can one be that the observed $C(I)$ can have been produced by the best-fit model $f_b(E)$? The answer to this question is known as the “goodness-of-fit” of the model. The $\chi^2$ statistic provides a well-known goodness-of-fit criterion for a given number of degrees of freedom ($\nu$, which is calculated as the number of channels minus the number of model parameters) and for a given confidence level. If $\chi^2$ exceeds a critical value (tabulated in many statistics texts) one can conclude that $f_b(E)$ is not an adequate model for $C(I)$. As a general rule, one wants the “reduced $\chi^2$” ($\chi^2/\nu$) to be approximately equal to one (i.e. $\chi^2 \sim \nu$). A reduced $\chi^2$ that is much greater than one indicates a poor fit, while a reduced $\chi^2$ that is much less than one indicates that the errors on the data have been over-estimated. Even if the best-fit model ($f_b(E)$) does pass the “goodness-of-fit” test, one still cannot say that $f_b(E)$ is the only acceptable model. For example, if the data used in the fit are not particularly good, one may be able to find many different models for which adequate fits can be found. In such a case, the choice of the correct model to fit is a matter of scientific judgment.

- For a given best-fit parameter ($p_1$), what is the range of values within which one can be confident the true value of the parameter lies? The answer to this question is the “confidence interval” for the parameter. The confidence interval for a given parameter is computed by varying the parameter value until the $\chi^2$ increases by a particular amount above the minimum, or “best-fit” value. The amount that the $\chi^2$ is allowed to increase (also referred to as the critical $\Delta\chi^2$) depends on the confidence level one requires, and on the number of parameters whose confidence space is being calculated. The critical $\Delta\chi^2$ values for common cases are given in the following table (from Avni 1976):

| Confidence | 1 parameter | 2 parameters | 3 parameters |
|------------|-------------|--------------|--------------|
| 0.68 | 1.00 | 2.30 | 3.50 |
| 0.90 | 2.71 | 4.61 | 6.25 |
| 0.99 | 6.63 | 9.21 | 11.30 |
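Both questions can be illustrated with a small sketch. It assumes the simplest possible case: a one-parameter model that predicts the same value in every channel, with made-up data and errors. The names `CRITICAL_DELTA` and `confidence_interval` are hypothetical. The parameter is scanned over a fine grid, and the confidence interval is taken as the range of grid values whose $\chi^2$ lies within the critical $\Delta\chi^2$ of the minimum. With more than one free parameter, the remaining parameters would normally need to be re-fitted at each step of the scan, which this sketch does not do.

```python
# Critical delta chi-squared values copied from the table above (Avni 1976),
# keyed by (confidence level, number of parameters of interest).
CRITICAL_DELTA = {
    (0.68, 1): 1.00, (0.68, 2): 2.30, (0.68, 3): 3.50,
    (0.90, 1): 2.71, (0.90, 2): 4.61, (0.90, 3): 6.25,
    (0.99, 1): 6.63, (0.99, 2): 9.21, (0.99, 3): 11.30,
}

def chi_squared(value, counts, sigma):
    """Chi-squared of a constant model C_p(I) = value."""
    return sum(((c - value) / s) ** 2 for c, s in zip(counts, sigma))

def confidence_interval(counts, sigma, grid, confidence=0.90):
    """Scan one parameter; keep grid values with chi2 <= chi2_min + delta."""
    stats = [chi_squared(v, counts, sigma) for v in grid]
    chi2_min = min(stats)
    limit = chi2_min + CRITICAL_DELTA[(confidence, 1)]
    inside = [v for v, s in zip(grid, stats) if s <= limit]
    best = grid[stats.index(chi2_min)]
    return best, chi2_min, (min(inside), max(inside))

counts = [8.0, 10.0, 12.0]      # made-up data
sigma = [2.0, 2.0, 2.0]         # made-up errors
grid = [5.0 + 0.001 * k for k in range(10001)]   # 5.000 ... 15.000

best, chi2_min, (low, high) = confidence_interval(counts, sigma, grid, 0.90)
dof = len(counts) - 1           # channels minus fitted parameters
reduced = chi2_min / dof        # goodness-of-fit check: want roughly 1
print(best, reduced, low, high)
```

For these numbers the reduced $\chi^2$ is close to one, and the 90% interval spans roughly 8.1 to 11.9 around a best-fit value of 10. The resolution of the interval limits is set by the grid spacing.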


Last modified: Tuesday, 28-May-2024 10:09:22 EDT
